Direct mapped cache example

7/6/2023

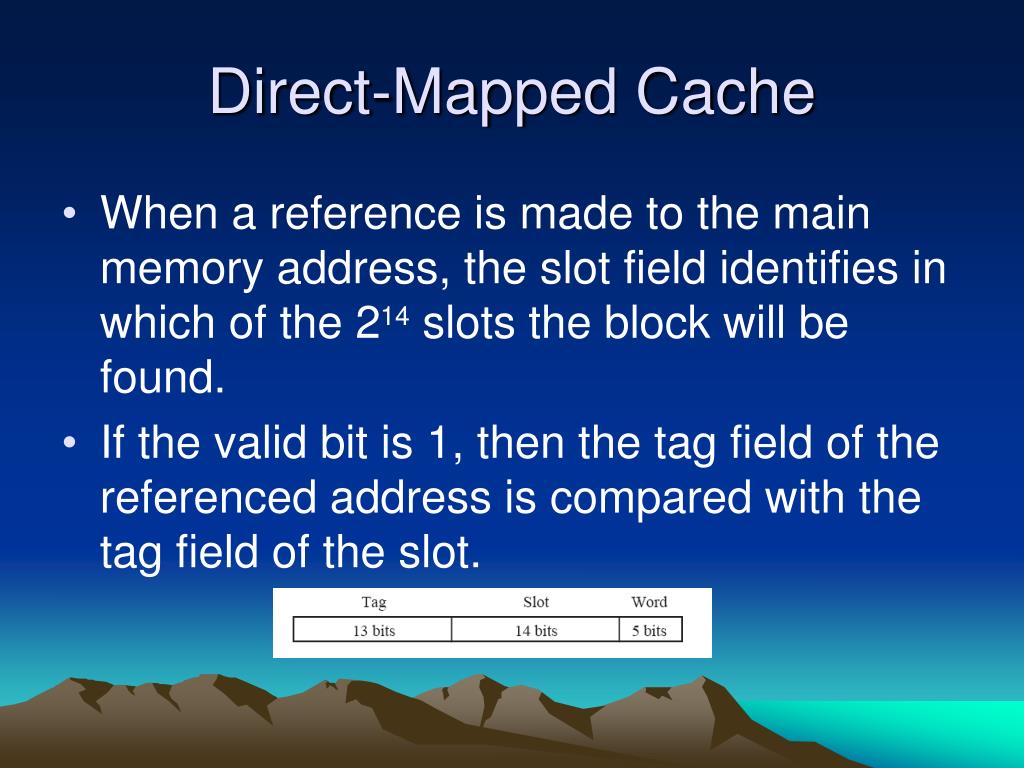

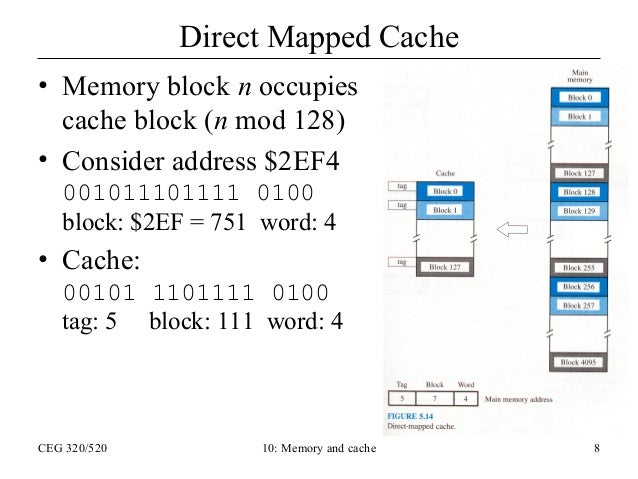

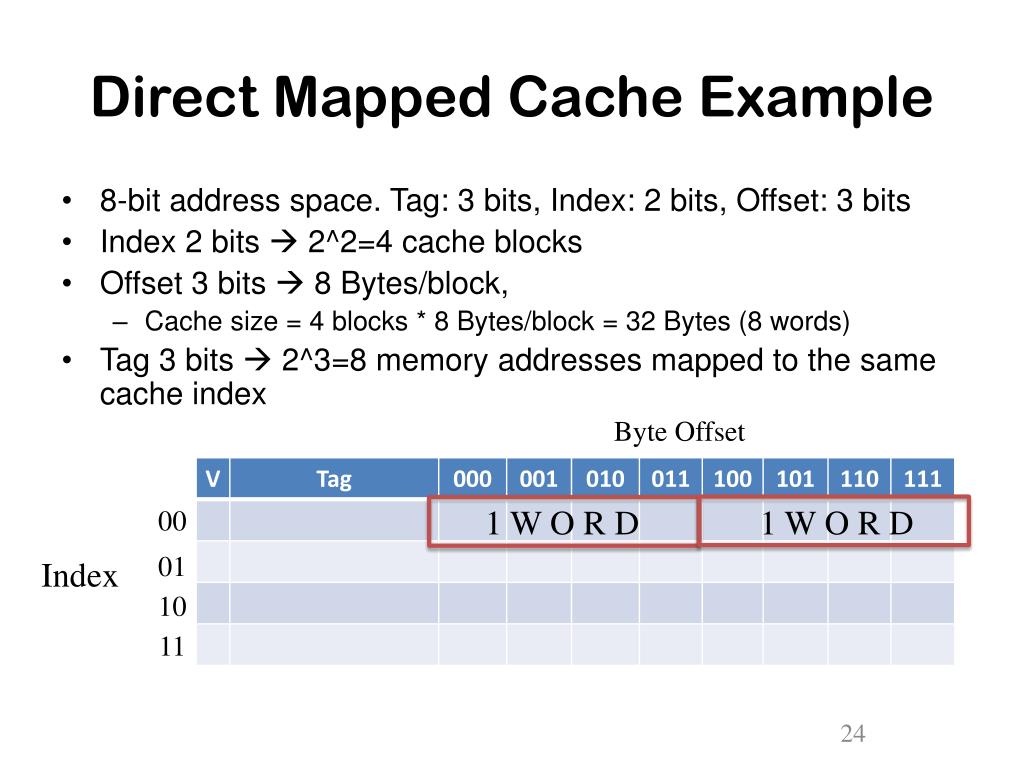

Here, w = number of bits needed to represent the block size, and cl = number of bits needed to represent the number of cache lines.

A Direct Mapped physical address can be split up into Tag, Line-Offset and Word-Offset. A block of the main memory can be placed in only one specific cache line (even if other cache lines are empty), and that cache line is given by B mod cl, where B is the block number and cl here denotes the number of cache lines (not the bit count defined above). For example, in a cache with cl = 4, block number 0 can only go into cache line number 0 and not any other cache line (because 0 mod 4 = 0). Because of this fixed placement, a direct-mapped cache has a higher rate of cache misses than mappings that let a block occupy more than one line.

For writing to the cache, the user has to enter a valid address along with the data that is to be written. We adopt the Write-through cache policy, wherein data is written to Level 1 and Level 2 of the cache memory at the same time, unlike the Write-back policy, wherein data is written to the lower level (L2) at a later stage. There can be 3 cases while writing to the cache:

- If the entered address exists in the Level 1 cache (HIT), the data is written to both the L1 and L2 caches (Write-through policy).
- If the entered address doesn't exist in the L1 cache but does exist in L2, the data is written in the L2 cache and the updated block is added to L1.
- If the entered address doesn't exist in either of the two caches, both caches are updated with the new block (with the data) corresponding to the entered address.

In case either or both of the caches are full, we use the LRU replacement policy to replace the least recently used blocks in the caches with the new block.

NOTE: If an address already has data written and we write to that address again, the old data is overwritten by the new data entered.

LRU Policy: We replace and evict using the Least Recently Used (LRU) policy. In the LRU cache implementation, we remove the least recently used block and add the most recently accessed block in its place (at the time of reading from or writing to the cache).
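The direct-mapped placement rule (B mod number of lines) and the Tag / Line-Offset / Word-Offset split can be illustrated with a small Python sketch. The sizes below (4 words per block, 4 cache lines, 16-bit addresses) and the names `split_address` and `line_for_block` are assumptions chosen for illustration, not values taken from the original implementation.

```python
ADDRESS_BITS = 16   # assumed address width
BLOCK_SIZE = 4      # words per block (assumed, power of two)
NUM_LINES = 4       # number of cache lines (assumed, power of two)

# Bit widths of the three address fields.
W_BITS = BLOCK_SIZE.bit_length() - 1     # word-offset bits: log2(block size)
LINE_BITS = NUM_LINES.bit_length() - 1   # line-offset bits: log2(number of lines)
TAG_BITS = ADDRESS_BITS - LINE_BITS - W_BITS

# Cache size = number of cache lines * size of one cache line (in words here).
CACHE_SIZE_WORDS = NUM_LINES * BLOCK_SIZE


def line_for_block(block_number):
    """Direct mapping: a block can only live in line (B mod number of lines)."""
    return block_number % NUM_LINES


def split_address(address):
    """Split an address into (tag, line, word) for a direct-mapped cache."""
    if not 0 <= address < 2 ** ADDRESS_BITS:
        raise ValueError("address out of range")
    block_number, word = divmod(address, BLOCK_SIZE)
    tag, line = divmod(block_number, NUM_LINES)
    return tag, line, word
```

With these assumed sizes, blocks 0, 4 and 8 all map to line 0, so they evict one another even when the other lines are empty. This is the source of the extra misses mentioned above.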

Cache memory is a smaller and faster type of memory located close to the CPU; it holds the most recently accessed data or code. A cache is made up of cache lines, which in turn are made up of words. Mathematically,

Cache size = No of cache lines * size of one cache line

The word length of the machine is 16 bits, which implies that any address with an integer value from 0 to 2**16 - 1, i.e., 0 to 65535, can be entered.

A multilevel cache with 2 levels (Level 1 and Level 2) is implemented where L1 is a subset of L2, i.e., L2 stores all the contents of L1 (however, the reverse is not true).

Cache operations are based on locality of reference:

- TEMPORAL LOCALITY: Data fetched now may be fetched again in the near future. The LRU replacement scheme is based on temporal locality.
- SPATIAL LOCALITY: Data at addresses near the address currently being used may be fetched soon. That is why we keep nearby addresses together in a block.

For a valid address, there can be 3 cases while reading from the cache:

- If the entered address exists in Level 1 of the cache (HIT), the data at that address is read.
- If the entered address exists in Level 2 of the cache (HIT), the data at that address is read and the corresponding block is added to L1.
- If the address doesn't exist in either of the two levels (MISS), implying that the block corresponding to this address was evicted when some other block was added, or never existed in the cache, the block containing that word (whose address is given) is added back to the cache.

NOTE: If there is no data in the cache at that address, we print the message “EMPTY!”, implying that we are trying to read data at an address that has never been written to.
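The read cases above, together with the write cases and LRU policy described earlier, can be sketched in Python roughly as follows. This is a simplified illustration under stated assumptions, not the original implementation: the class name `TwoLevelCache`, the block size of 4 words and the default capacities are hypothetical. Each level is treated as a single LRU-ordered pool of blocks, and no main memory is modeled, so data in a block evicted from L2 is lost.

```python
from collections import OrderedDict

BLOCK_SIZE = 4  # words per block (assumed)


class TwoLevelCache:
    """Hypothetical two-level, write-through cache with LRU eviction."""

    def __init__(self, l1_blocks=2, l2_blocks=4):
        self.capacity = {"L1": l1_blocks, "L2": l2_blocks}
        # Each level maps block number -> {word offset: data}, oldest first.
        self.levels = {"L1": OrderedDict(), "L2": OrderedDict()}

    def _touch(self, level, block_no, contents):
        """Mark a block as most recently used, inserting (and evicting) if needed."""
        cache = self.levels[level]
        if block_no in cache:
            cache.move_to_end(block_no)
            return
        if len(cache) >= self.capacity[level]:
            victim, _ = cache.popitem(last=False)  # least recently used block
            if level == "L2":
                # Assumption: drop the victim from L1 too, so L1 stays a subset of L2.
                self.levels["L1"].pop(victim, None)
        cache[block_no] = contents

    def _access(self, block_no):
        """Classify the access, then make sure the block is in both levels."""
        l1, l2 = self.levels["L1"], self.levels["L2"]
        if block_no in l1:
            where = "L1 HIT"
        elif block_no in l2:
            where = "L2 HIT"
        else:
            where = "MISS"
        contents = dict(l2.get(block_no, {}))  # copy of the L2 block, empty on a miss
        self._touch("L2", block_no, contents)
        self._touch("L1", block_no, dict(contents))
        return where

    def write(self, address, data):
        block_no, offset = divmod(address, BLOCK_SIZE)
        where = self._access(block_no)
        for level in ("L1", "L2"):  # write-through: update both levels together
            self.levels[level][block_no][offset] = data
        return where

    def read(self, address):
        block_no, offset = divmod(address, BLOCK_SIZE)
        where = self._access(block_no)
        return self.levels["L1"][block_no].get(offset, "EMPTY!"), where
```

For example, after `cache = TwoLevelCache()` and `cache.write(0, "a")`, calling `cache.read(0)` returns `("a", "L1 HIT")`, while `cache.read(1)` returns `("EMPTY!", "L1 HIT")` because that word of the block was never written.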
